By the time a paradigm shift reaches consensus, it feels like the most obvious thing in the world.

Analysts upgrade in unison. CNBC runs the ticker in the lower third. Your brother-in-law mentions it at Thanksgiving. The narrative has gone from "that won't work" to "of course it works—everyone knows that."

This is Stage 3. And it's where most investors finally show up.

The temptation, at this point, is to assume the story is over. The early believers got rich, the skeptics got humbled, and the rest missed it. That assumption is wrong.

The Day Everybody Agrees

On November 21, 2023, Nvidia reported quarterly data center revenue of $14.5 billion—a 279% year-over-year increase. The stock gapped up. Analysts scrambled to raise price targets. The word "AI" appeared in approximately 100% of financial media that week.

This was the consensus moment for artificial intelligence.

The core thesis around AI was no longer debated. Every major investment bank had an AI research vertical. Every corporate earnings call mentioned it. The S&P 500's performance was increasingly driven by a handful of companies positioned at the center of the AI supply chain.

And yet.

Between that consensus moment in late 2023 and the end of 2025, Nvidia's stock roughly tripled again. The AI infrastructure buildout accelerated, rather than slowed, when consensus arrived. Hyperscaler capex grew larger, not smaller. New model architectures demanded more compute, not less.

So, why didn't the efficient market already price that in?

Here's what consensus usually gets right: the core technology works. Here's what it consistently gets wrong: the scale of what it enables.

Why Consensus Undercounts

Once a technology achieves consensus, analysts model its impact within the existing market framework. They extrapolate from current adoption curves and known use cases.

This is reasonable. It's also—almost structurally—an undercount. Paradigm shifts always expand the addressable market in unforeseen ways.

The smartphone is the classic example. By 2010, the consensus was in. Apple had sold over 70 million iPhones. Android was scaling. BlackBerry's obituary was being drafted. The smartphone had "won."

Analysts modeled the smartphone replacing the feature phone, a one-for-one substitution across a global handset market of about 1.2 billion units per year.

What they didn't model was the smartphone also replacing the point-and-shoot camera, the GPS unit, the MP3 player, the alarm clock, the flashlight, the boarding pass, the wallet, the newspaper, and the personal computer for more than a billion people who had never owned one.

In 2024, Apple's App Store enabled nearly $1.3 trillion in global billings and sales. That market didn't even exist in 2007.

Every Consensus Creates New Footnotes

Artificial intelligence is now consensus. Hyperscalers have committed hundreds of billions in capex. Every major power in the world now has an AI policy. The "will AI happen?" debate is settled.

But that consensus immediately surfaces a new set of problems:

Firm Baseload Power

AI data centers consumed an estimated 460 TWh of electricity in 2024. By 2030, that figure is projected to approach 945 TWh, or roughly the entire electricity consumption of Japan. The existing grid wasn't built for this.
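As a rough check on the pace those two figures imply, the sketch below computes the compound annual growth rate between the 2024 estimate and the 2030 projection. The function name and the assumption of smooth year-over-year compounding are illustrative only; they are not part of the underlying forecasts.

```python
def implied_cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate implied by two endpoint values."""
    if start_value <= 0 or years <= 0:
        raise ValueError("start_value and years must be positive")
    return (end_value / start_value) ** (1 / years) - 1


# Figures quoted in the text: 460 TWh in 2024, about 945 TWh by 2030.
# Smooth compounding between the endpoints is an assumption.
growth = implied_cagr(460.0, 945.0, years=2030 - 2024)
print(f"Implied annual growth: {growth:.1%}")
```

Under these assumptions, demand would need to grow at a little under 13% a year, every year, for six years. Grids are rarely planned around that kind of sustained growth.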

In parts of the U.S., utilities have already paused new data center interconnections because there isn't enough power to go around. Retail electricity prices have climbed nearly 40% since 2021, with data centers a major driver.

Solar and wind are cheap, but they're intermittent. A data center running a frontier AI training run can't pause when the wind dies. It needs firm power—electricity that shows up every hour, rain or shine. Natural gas provides that today but at an unsustainable carbon cost.

Power was abundant. Now it isn't.

This single constraint is spawning an entire ecosystem of footnotes.

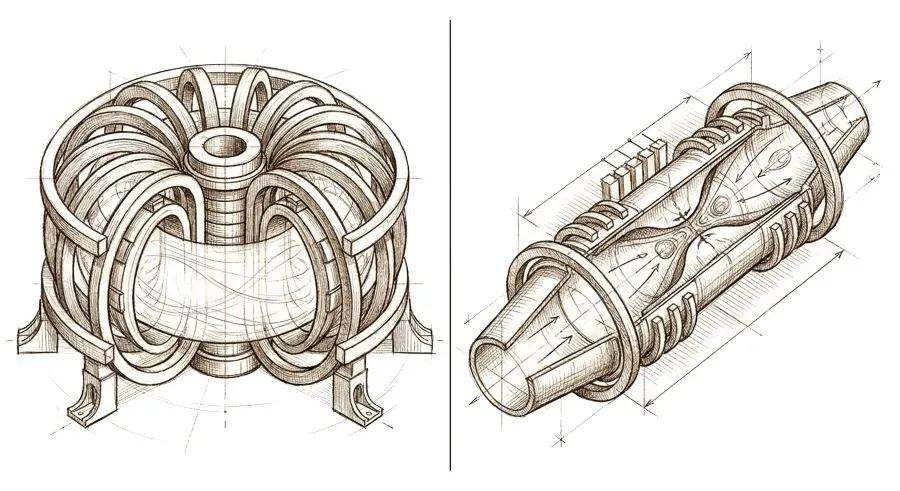

Small modular reactors (SMRs) trade the gargantuan scale of traditional nuclear plants for factory-built, modular units that can be deployed incrementally.

Enhanced geothermal systems (EGS) apply horizontal drilling and hydraulic fracturing techniques, borrowed directly from the shale revolution, to engineer geothermal reservoirs in hot rock formations where none exist naturally.

Fusion, the "holy grail," is no longer dismissed as thirty years away. Big tech buyers have signed power purchase agreements targeting the early 2030s. Engineering challenges remain, but commercial reactors are already in development.

And of course, long-duration energy storage (LDES) serves as a hedge against timeline slippage for all of the above.

If any of these approaches reach commercial scale, the energy constraint that bottlenecks AI disappears.

Photonic Computing

Model parameters have grown over 34x in four years. Cluster sizes have grown 10x. But interconnect bandwidth has only improved 8x. Copper traces on a circuit board can only carry so much data so fast before they hit thermal and electromagnetic limits.

So while steady progress in GPU transistor density got us to today, the new bottleneck is data movement.

In a frontier AI training cluster, GPUs sit idle for large fractions of their cycle time, waiting for data to arrive from neighboring chips. The faster the processors get, the worse this ratio becomes.
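A toy model makes the widening gap concrete. The sketch below assumes each training step is a compute phase followed by a data-transfer phase with no overlap between them; real clusters overlap the two to some degree, and every number here is hypothetical.

```python
def gpu_utilization(compute_work: float, compute_speed: float,
                    data_volume: float, bandwidth: float) -> float:
    """Fraction of a step spent computing, assuming no compute/transfer overlap."""
    compute_time = compute_work / compute_speed
    transfer_time = data_volume / bandwidth
    return compute_time / (compute_time + transfer_time)


# Hypothetical baseline: compute and data transfer each take one time unit.
baseline = gpu_utilization(compute_work=1.0, compute_speed=1.0,
                           data_volume=1.0, bandwidth=1.0)

# Hypothetical upgrade: processors get 4x faster, bandwidth only doubles.
faster_chips = gpu_utilization(compute_work=1.0, compute_speed=4.0,
                               data_volume=1.0, bandwidth=2.0)

print(f"baseline utilization:    {baseline:.0%}")
print(f"faster-chip utilization: {faster_chips:.0%}")
```

In this simplified setup the faster chips finish their work sooner but spend a larger share of each step waiting, so utilization falls from one half to one third even though both components improved.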

Photonics addresses this at the level of physics. Optical links carry far more data per channel than copper, lose much less energy as heat over distance, and can carry multiple data streams simultaneously on different wavelengths of light.

This is still a laboratory-to-factory story. Optical components are harder to miniaturize and mass-produce than silicon transistors. But the structural logic is sound: if the limiting factor on AI scaling is moving data between chips, and light moves data better than electricity, then photonics is the next architectural shift hiding behind a manufacturing problem.

Neuromorphic Chips

Under the hood, current AI chips basically run on brute force. Every input gets processed through every layer of the network, every cycle, even if nothing changes. It's like reading the entire newspaper every second to check if a single headline has updated.

Neuromorphic computing aims to mimic how biological brains actually work. Instead of processing data in fixed frames synchronized by a central clock, neuromorphic chips fire digital "spikes" only when new information arrives.

Neurons that have nothing to report stay silent and consume almost no power. This is how your brain runs on roughly 20 watts while performing feats that the world's largest data centers can barely approximate.
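The contrast between clocked and event-driven processing can be sketched in a few lines. The example below is only an analogy in ordinary Python, not a model of any actual neuromorphic chip: it counts how many values each approach would touch when a mostly static signal changes occasionally. The function names and the sample signal are hypothetical.

```python
from typing import List


def frame_based_updates(frames: List[List[int]]) -> int:
    """Clocked approach: every value in every frame is processed."""
    return sum(len(frame) for frame in frames)


def event_driven_updates(frames: List[List[int]]) -> int:
    """Event-driven approach: only values that changed since the
    previous frame generate work (a "spike")."""
    if not frames:
        return 0
    updates = len(frames[0])  # the first frame is all new information
    for previous, current in zip(frames, frames[1:]):
        updates += sum(1 for a, b in zip(previous, current) if a != b)
    return updates


# Hypothetical 4-sensor signal over 5 time steps; at most one value changes per step.
signal = [
    [0, 0, 0, 0],
    [0, 1, 0, 0],
    [0, 1, 0, 0],
    [0, 1, 0, 2],
    [0, 1, 0, 2],
]

print("frame-based updates: ", frame_based_updates(signal))
print("event-driven updates:", event_driven_updates(signal))
```

The quieter the input, the larger the gap between the two counts, which is the intuition behind the energy savings claimed for spiking hardware.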

Despite being in their infancy, neuromorphic systems have already demonstrated AI inference at up to 100x less energy and 50x greater speed than conventional embedded processors on certain workloads.

The Three Traps of Stage 3

Consensus is safer than the Footnote. It's more comfortable than the Inflection. That comfort breeds its own set of mistakes.

Trap #1: Mistaking consensus for a ceiling.

When an analyst says a stock is "priced for perfection," they're usually saying the consensus scenario is fully reflected in the valuation. That's often correct. What's missing is that the consensus scenarios are almost always too narrow.

Nobody in 2015 had "Nvidia becomes a $3 trillion company" in their model. The use cases that drove Nvidia to $3 trillion hadn't been invented yet. The consensus at the time—gaming GPUs with a growing data center business—was accurate. It was also about one-tenth of the eventual story.

When the total addressable market is expanding faster than the consensus can model it, "priced for perfection" is a misleading frame.

Trap #2: Buying narrative, not structure.

The consensus stage is when stories get loudest. Every company in the sector has an "AI strategy." Every earnings call mentions the paradigm. Every venture pitch deck cites the same McKinsey report on the multi-trillion-dollar opportunity.

Narrative abundance is a feature of consensus. Signal clarity is not.

Discipline here is key: Which companies have actual revenue tied to the paradigm? Which have pricing power in their specific niche? Which are riding structural demand rather than speculative demand?

The dot-com era is the cautionary tale. The internet was the right paradigm, but Pets.com was the wrong expression of it. During the consensus stage, the gap between the best and worst investments within a paradigm widens dramatically.

Trap #3: Ignoring the new footnotes.

Consensus is not the end of the cycle. It's the point at which the cycle reproduces.

In 2012, most people were laser-focused on the mobile computing consensus. That’s why barely anyone noticed a small team at the University of Toronto using gaming GPUs to obliterate an image-recognition benchmark.

That footnote became the most important technology story of the next decade.

The Cycle Continues

We've walked through three stages—Footnote, Inflection, Consensus—as if they're a neat sequence. In practice, they're a spiral.

Every paradigm shift, upon reaching consensus, destabilizes adjacent systems in ways that create new footnotes. Those footnotes, if the science is real and the cost curves bend, become inflections. The inflections mature into new consensus. And the cycle continues.

Somewhere inside today's AI consensus, a technology that will define the 2030s is sitting in a research paper, or a patent filing, or a line item that hasn’t been broken out yet. It looks like a footnote. It is one.